The AI Narrative

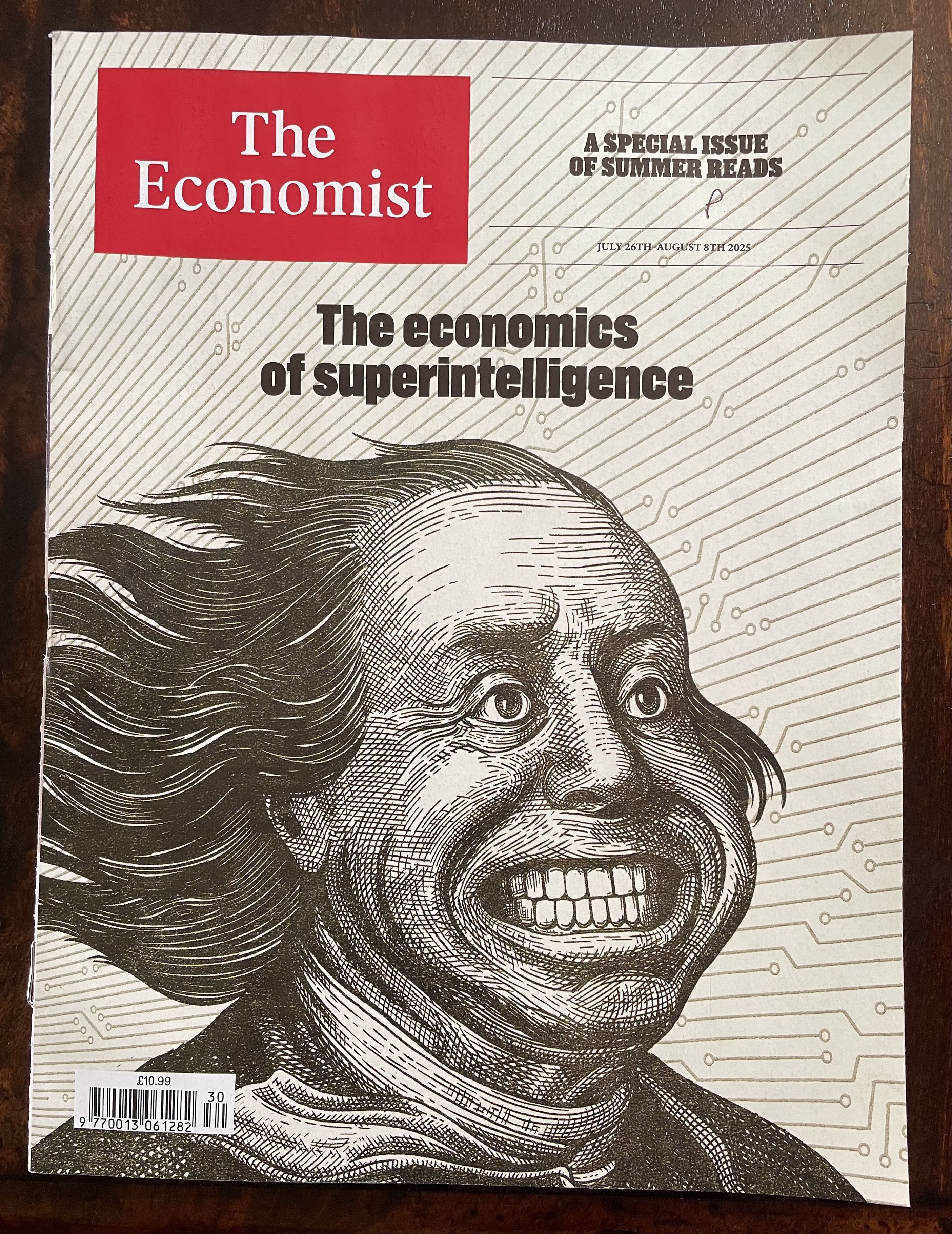

"AI will be capable of generating novel insights next year." (Altman, 2025)

"Self-improving AI will create a super intelligence." (Musk, 2025)

"When I look at the data, I see many trend lines up to 2027." (Clark, 2025)

"There is a 10-20% chance that the technology will end in human extinction." (Hinton, 2025)

"Our relationship with future AI systems is that we are going to be their boss." (LeCun, 2025)

"Within ten years a digital computer will be the world's chess champion." (Simon & Newell, 1958)

"Machines will be capable, within twenty years, of doing any work a man can do." (Simon, 1965)

"Within a generation... the problem of creating 'artificial intelligence' will substantially be solved." (Minsky, 1967)

"In from three to eight years we will have a machine with the general intelligence of an average human being." (Minsky, 1970)

"We should stop training radiologists now. It’s just completely obvious that within five years, deep learning is going to do better than radiologists." (Hinton, 2016)

The latest wave of the narrative is Agentic AI: autonomous agents, tool use, and orchestration. The pattern matches earlier hype: bold claims, compressed timelines, a rush to product, and disregard for earlier research communities such as Multi-Agent Systems (MAS).

The same structure is taking shape around quantum computing: early narratives mix real progress with inflated expectations to attract investment.

"It seems to me what is called for is an exquisite balance between two conflicting needs: the most skeptical scrutiny of all hypotheses that are served up to us and at the same time a great openness to new ideas. Obviously those two modes of thought are in some tension. But if you are able to exercise only one of these modes, whichever one it is, you're in deep trouble." (The Burden of Skepticism, Sagan, 1987)

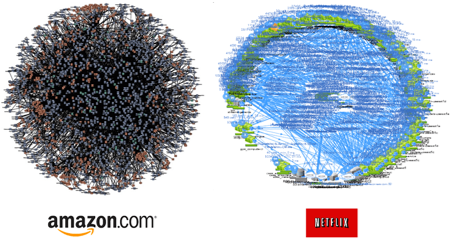

Big tech companies have a direct economic interest in sustaining the narrative. They create value bubbles by investing in each other, inflating expectations and market valuations.

The hype cycle is not only an intellectual phenomenon but also an economic one.

Intellectual Debt

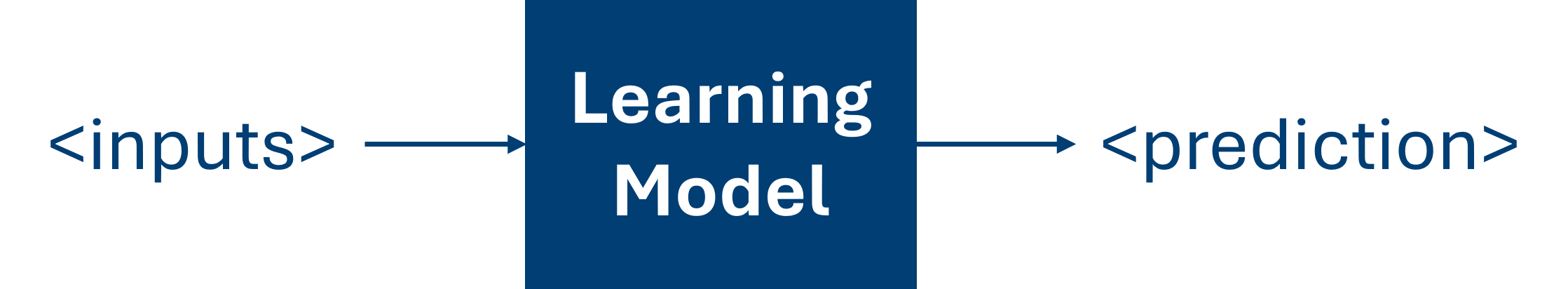

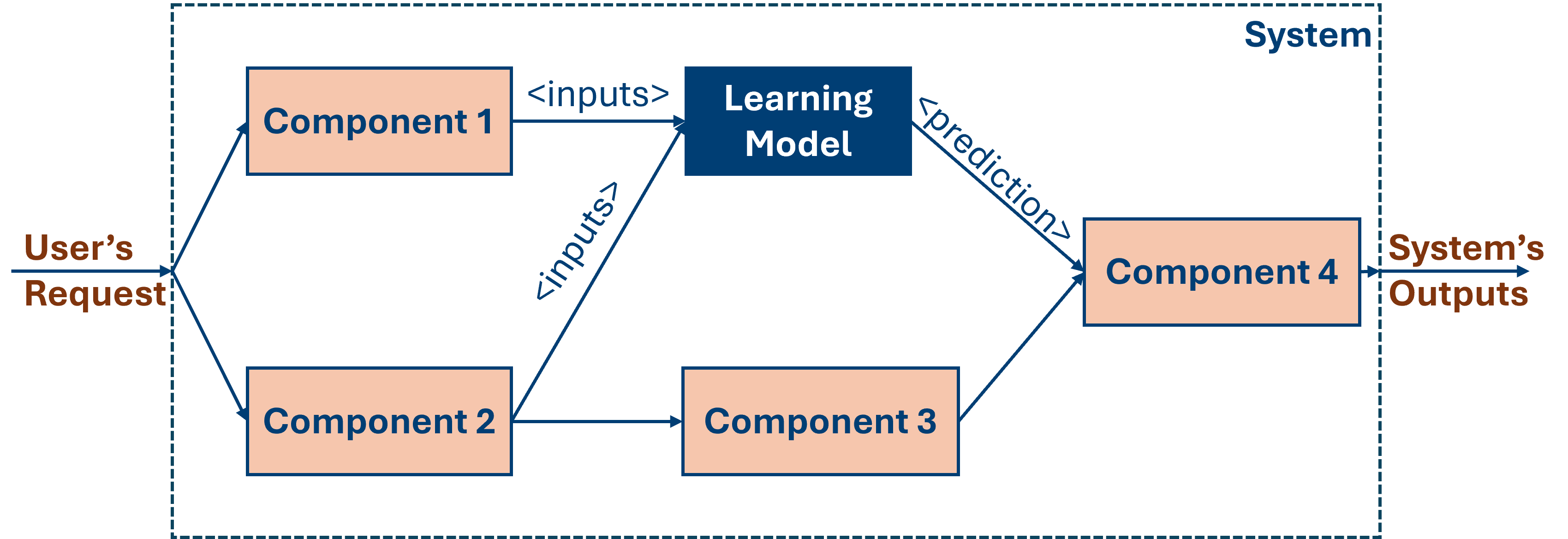

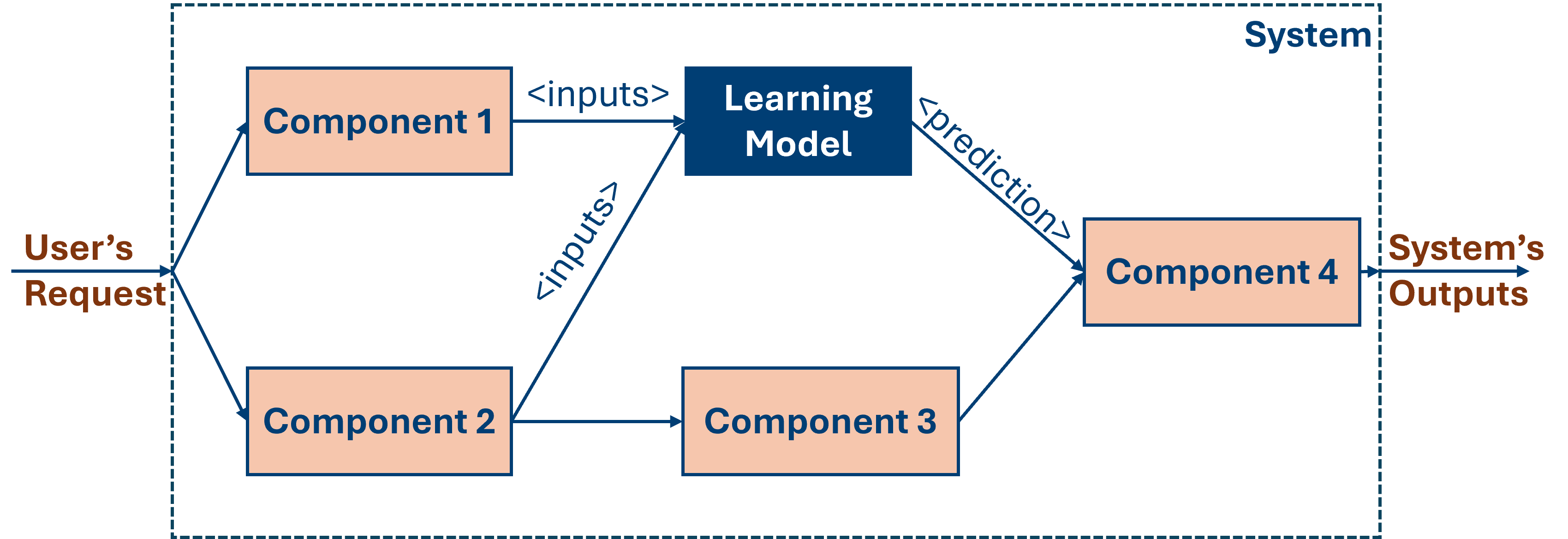

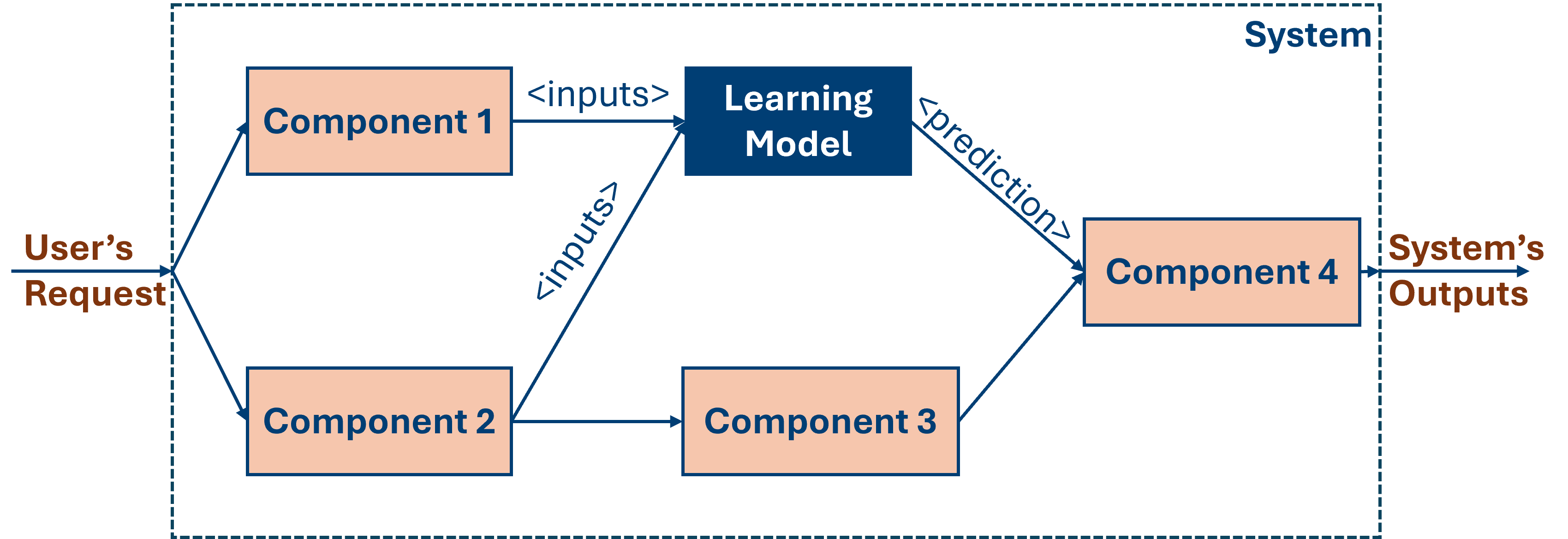

AI-based software systems are data-driven. Unlike in traditional systems, developers cannot fully predefine their behaviour. ML components learn such behaviour from data, operating as black boxes that propagate uncertainty into complex software.

Intellectual Debt: Practitioners deploy data-driven systems that work in practice, but do not fully understand their inner workings. This threatens transparency, safety, and trust, increasing risks of AI's negative social impact (Zittrain, 2022).

Data-Oriented Architectures

Data-Orientation

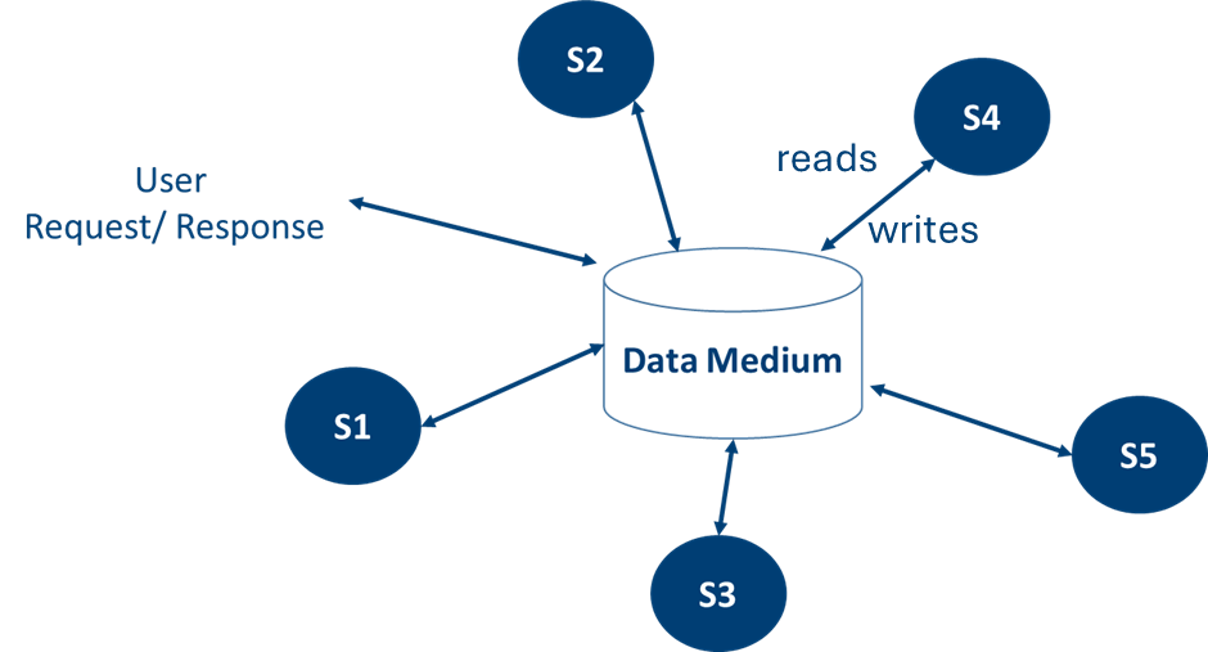

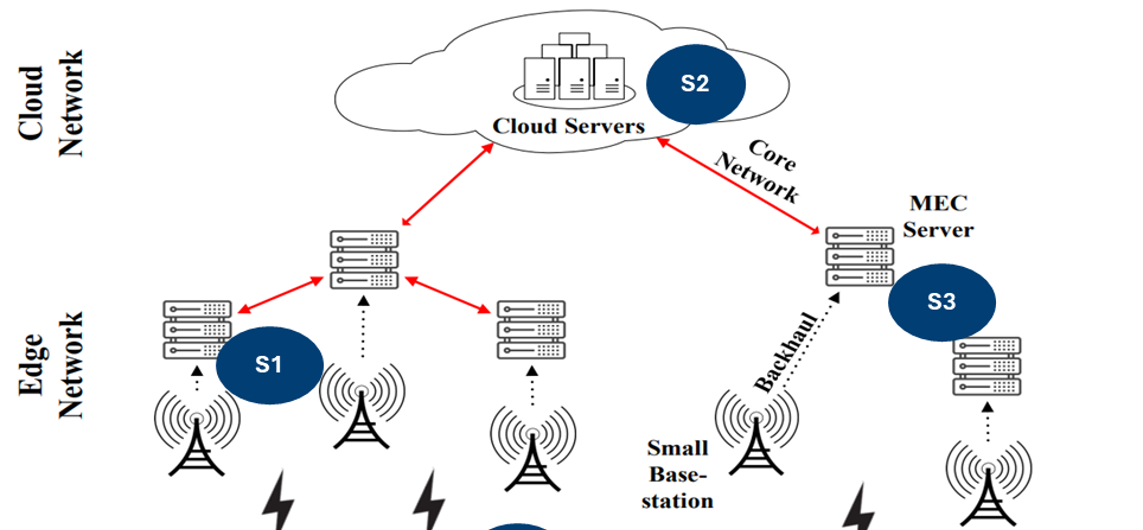

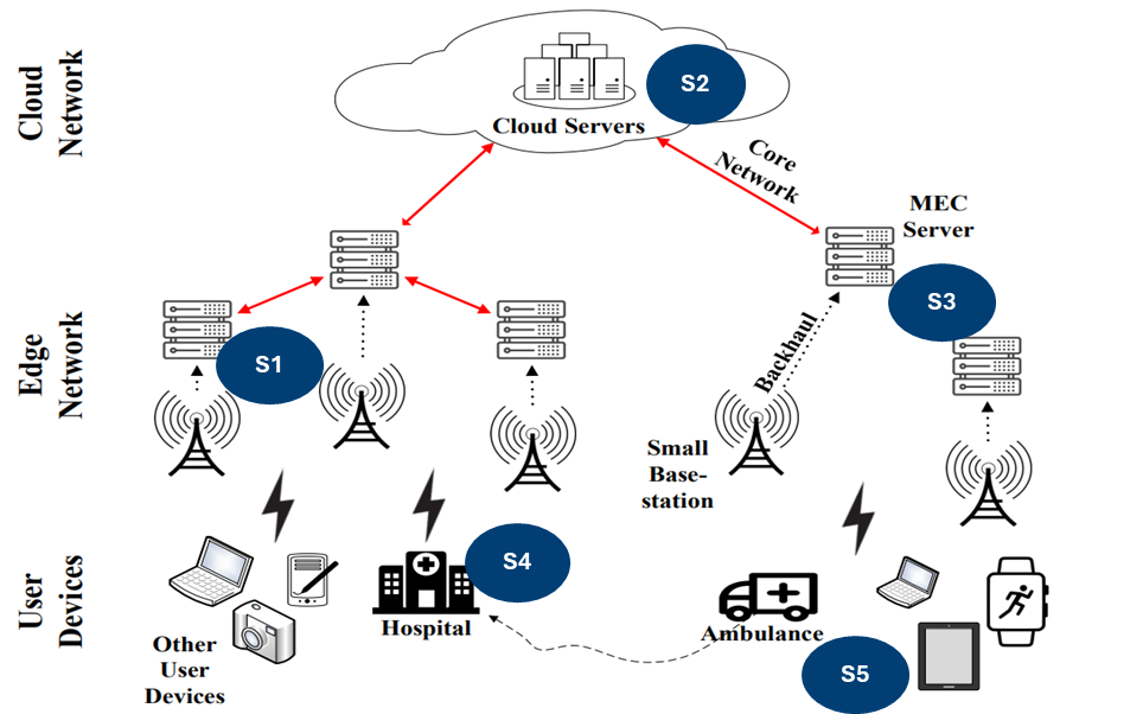

Data-Oriented Architecture (DOA) is an architectural style developed to address the requirements of data-intensive systems that work in real-time without centralised servers (Vorhemus, 2017).

Data-First Systems

- Data is available by design

- Traceability and monitoring

- Interpretability

Prioritise Decentralisation

- Super-low latency requirements

- Privacy by design

Openness

- Sustainable solutions

- Data ownership

Most of the surveyed works partially adopt the DOA principles to handle data-intensive requirements. The survey results also show that diverse tools can support adopting DOA principles: Apache Kafka, Spark Streaming, Hadoop Distributed File System, MQTT, and RabbitMQ.

Data-Oriented Architectures make data available by design, facilitating monitoring and maintenance. Decentralisation supports local data processing, reducing latency and improving privacy by respecting data ownership. Openness enables managing resource-constrained environments by exploiting the computing power of everyday devices (Cabrera et al., 2025).

How can we exploit these properties to address the intellectual debt problem in AI-based systems?

DOAgent

    !pip install -q git+https://github.com/cabrerac/doagent.git

    from doagent import Session

    # heuristic_goal_seek and heuristic_push_block are policy factories
    # that must be defined before this call.
    session = Session.from_config({
        "shared_data": {"type": "file"},
        "scenario_name": "push",
        "output_base": "./output",
        "run_config": {"logging_level": 2},
        "policies": {
            "goal_seek": heuristic_goal_seek,
            "push_block": heuristic_push_block,
        },
    })

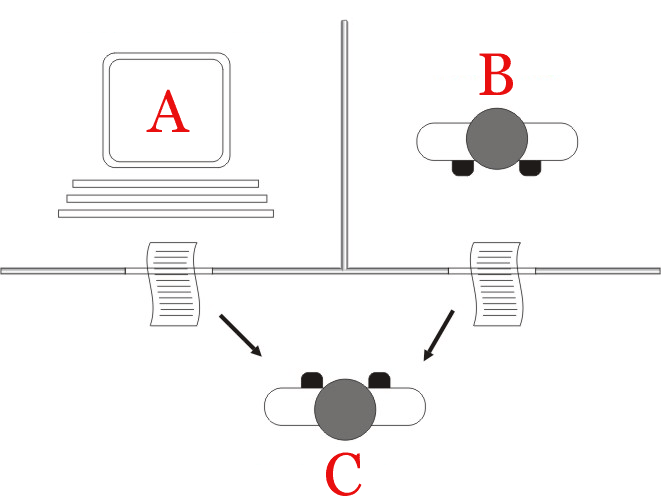

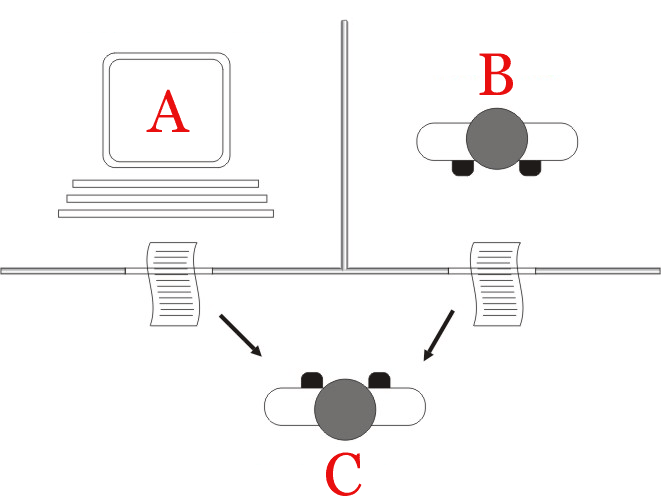

Data-first Principle

Agents communicate through a shared data substrate.

- Config-driven Session API: one entry point for env, agents, and policies

- Shared data adapters: InMemory, File (JSONL), MongoDB

- Logging levels control what is recorded (traces, provenance, reasoning)
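To make the idea of a shared data substrate concrete, here is a minimal sketch of an append-only JSONL store. It is not DOAgent's File adapter: the class name `JsonlStore`, its methods, and the one-file-per-kind layout are assumptions chosen for illustration.

```python
import json
import tempfile
from pathlib import Path


class JsonlStore:
    """Hypothetical append-only store: one JSONL file per record kind."""

    def __init__(self, base_dir):
        self.base = Path(base_dir)
        self.base.mkdir(parents=True, exist_ok=True)

    def publish(self, record):
        # Append the record as a single JSON line to the file for its kind.
        path = self.base / f"{record['kind']}.jsonl"
        with path.open("a", encoding="utf-8") as f:
            f.write(json.dumps(record) + "\n")

    def read(self, kind):
        # Return every record of the given kind, in publication order.
        path = self.base / f"{kind}.jsonl"
        if not path.exists():
            return []
        with path.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f if line.strip()]


# Agents and the environment write to the same store and never call each other.
store = JsonlStore(tempfile.mkdtemp())
store.publish({"id": "au-1", "kind": "agent_update", "actor": "agent_0"})
store.publish({"id": "out-1", "kind": "outcome", "actor": "env"})
updates = store.read("agent_update")
```

Because every interaction passes through the store, anything an agent did can later be inspected without access to the agent itself.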

    {
      "id": "au-abc123",
      "timestamp": "2026-03-28T10:00:00Z",
      "actor": "agent_0",
      "kind": "agent_update",
      "payload": {
        "decision": {
          "request": {"inputs": {"observation": {...}}},
          "response": {
            "choice": {"status": "act", "action": 2},
            "reasoning": {"steps": [...]}
          },
          "explanation": "Moved toward landmark."
        }
      },
      "provenance": {"agent": "agent_0", "sources": [...]},
      "accountability": {"owner": "team-a", "policy_id": "pol-1"}
    }

Data Model

- agent_update: decision envelope with request, response (choice + reasoning), and explanation

- outcome: environment state after each step

- trace: cause-effect links between outcomes via agent_updates

Logging levels:

- Level 0: agent_update + outcome

- Level 1: + trace + provenance + accountability

- Level 2: + explanation + reasoning
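One way to read the logging levels is as a filter applied to each record before it is stored. The sketch below follows the level list above and the record layout shown earlier. The function name `apply_logging_level` and the decision to drop fields (rather than never compute them) are assumptions, not DOAgent's actual mechanism.

```python
import copy


def apply_logging_level(record, level):
    """Return the part of `record` to store at `level`, or None to skip it."""
    # Trace records only exist from level 1 upwards.
    if record["kind"] == "trace" and level < 1:
        return None
    stored = copy.deepcopy(record)  # never mutate the caller's record
    if level < 1:
        stored.pop("provenance", None)
        stored.pop("accountability", None)
    if level < 2:
        decision = stored.get("payload", {}).get("decision", {})
        decision.pop("explanation", None)
        decision.get("response", {}).pop("reasoning", None)
    return stored


record = {
    "id": "au-1",
    "kind": "agent_update",
    "payload": {"decision": {
        "response": {"choice": {"status": "act", "action": 2},
                     "reasoning": {"steps": []}},
        "explanation": "Moved toward landmark.",
    }},
    "provenance": {"agent": "agent_0"},
    "accountability": {"owner": "team-a"},
}
level_0 = apply_logging_level(record, 0)  # choice only
level_1 = apply_logging_level(record, 1)  # + provenance, accountability
level_2 = apply_logging_level(record, 2)  # full record
```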

    from doagent import Session

    session = Session.from_config({
        ...
        "topology": {
            "mode": "peer_to_peer",
            "visibility": {
                "agent_0": ["agent_1"],
                "agent_1": ["agent_2"],
            }
        },
    })

    records = session.visible_records("agent_0", kind="agent_update")

Decentralisation Principle

Support for heterogeneous communication schemas.

- Topology: centralised, federated, peer-to-peer

- Visibility filters which records each agent sees

- Same agent code runs under any topology — configuration, not code change
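The visibility idea can be sketched as a plain filter over shared records. This is an illustration only: the function `visible_to`, the rule that an agent always sees its own records, and the reading of "centralised" as "everyone sees everyone" are assumptions, not DOAgent's implementation.

```python
def visible_to(records, viewer, visibility):
    """Records written by `viewer` or by an agent it is allowed to see."""
    allowed = {viewer, *visibility.get(viewer, [])}
    return [r for r in records if r["actor"] in allowed]


records = [
    {"id": "au-1", "actor": "agent_0", "kind": "agent_update"},
    {"id": "au-2", "actor": "agent_1", "kind": "agent_update"},
    {"id": "au-3", "actor": "agent_2", "kind": "agent_update"},
]

# Two topologies expressed purely as configuration.
agent_ids = ["agent_0", "agent_1", "agent_2"]
peer_to_peer = {"agent_0": ["agent_1"], "agent_1": ["agent_2"]}
centralised = {a: agent_ids for a in agent_ids}  # assumed: full visibility

p2p_view = [r["id"] for r in visible_to(records, "agent_0", peer_to_peer)]
central_view = [r["id"] for r in visible_to(records, "agent_0", centralised)]
```

The agent-side call is identical in both cases; only the visibility map changes.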

    session.register_participant("agent_0",
                                 capabilities=["map_discovery"])

    if energy <= 0:
        session.deregister_participant("agent_0")

    participants = session.participation_registry

Openness Principle

Agents can join and leave at any time.

- ParticipationRegistry: register and query which agents are present

- Capabilities and resources per agent

- Session-level API for join/leave
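As a rough illustration of join/leave bookkeeping, the sketch below keeps a registry of present agents and their capabilities. The class `RegistrySketch` and its methods are hypothetical and deliberately named differently from the library's `ParticipationRegistry`, whose interface is not shown here.

```python
class RegistrySketch:
    """Hypothetical registry of the agents currently taking part."""

    def __init__(self):
        self._present = {}  # agent id -> set of capabilities

    def register(self, agent_id, capabilities=()):
        self._present[agent_id] = set(capabilities)

    def deregister(self, agent_id):
        # Leaving twice is harmless.
        self._present.pop(agent_id, None)

    def present(self):
        return sorted(self._present)

    def with_capability(self, capability):
        return sorted(a for a, caps in self._present.items()
                      if capability in caps)


registry = RegistrySketch()
registry.register("agent_0", capabilities=["map_discovery"])
registry.register("agent_1", capabilities=["map_discovery", "push"])

# Energy-based participation: an agent with no energy left leaves the run.
energy = {"agent_0": 0, "agent_1": 5}
for agent_id, level in energy.items():
    if level <= 0:
        registry.deregister(agent_id)
```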

What users provide

- Environments: Use built-in (e.g. PettingZoo) or custom. The library wraps them so outcomes and traces are recorded

- Agents: Define via config. The library creates them and connects them to shared data

- Policies: Plug in any decision logic (heuristic, RL, LLM, or custom). The library records decisions and optional reasoning

- Tools (optional): Per-agent callables. The library wraps them for transparent tool-use tracing

    from doagent import Session, make_env

    # config, configs, and create_push_env must be defined before this point.
    session = Session.from_config(config)
    env = make_env(create_push_env, max_cycles=100)
    wrapped = session.wrap_env(env, env_actor="push_env")
    agents = session.create_agents(configs, goal="push_towards_landmark")

    observations = wrapped.reset(seed=42)
    for round_id in range(1, 101):
        # Each agent decides from its own observation for this round.
        actions = {
            aid: agents[aid].decide(observations[aid], round_id)["action"]
            for aid in agents
        }
        step = wrapped.step(actions)
        observations = step["observations"]

Run loop

- session.wrap_env records outcomes and traces automatically

- session.create_agents binds policies from config

- agent.decide() records the agent_update, wraps tools, merges reasoning

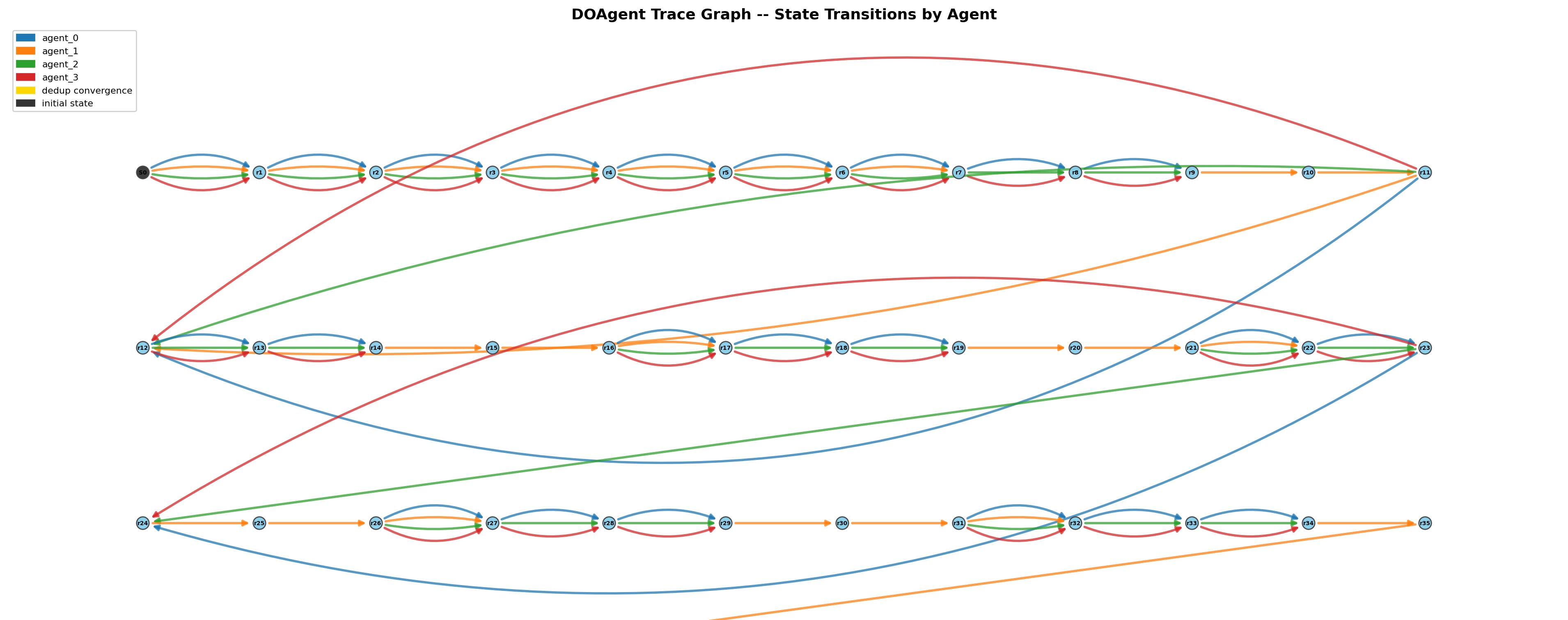

GridWorld example

- Dependency-free grid-world mapping scenario: agents discover cells and landmarks under partial observations

- Each round agents publish an agent_update. They read the shared map (from visible records) and choose a move

- Configurable topology and visibility. Optional energy-based participation (join/leave)

- Run from config. Session records outcomes, traces, and agent_updates transparently

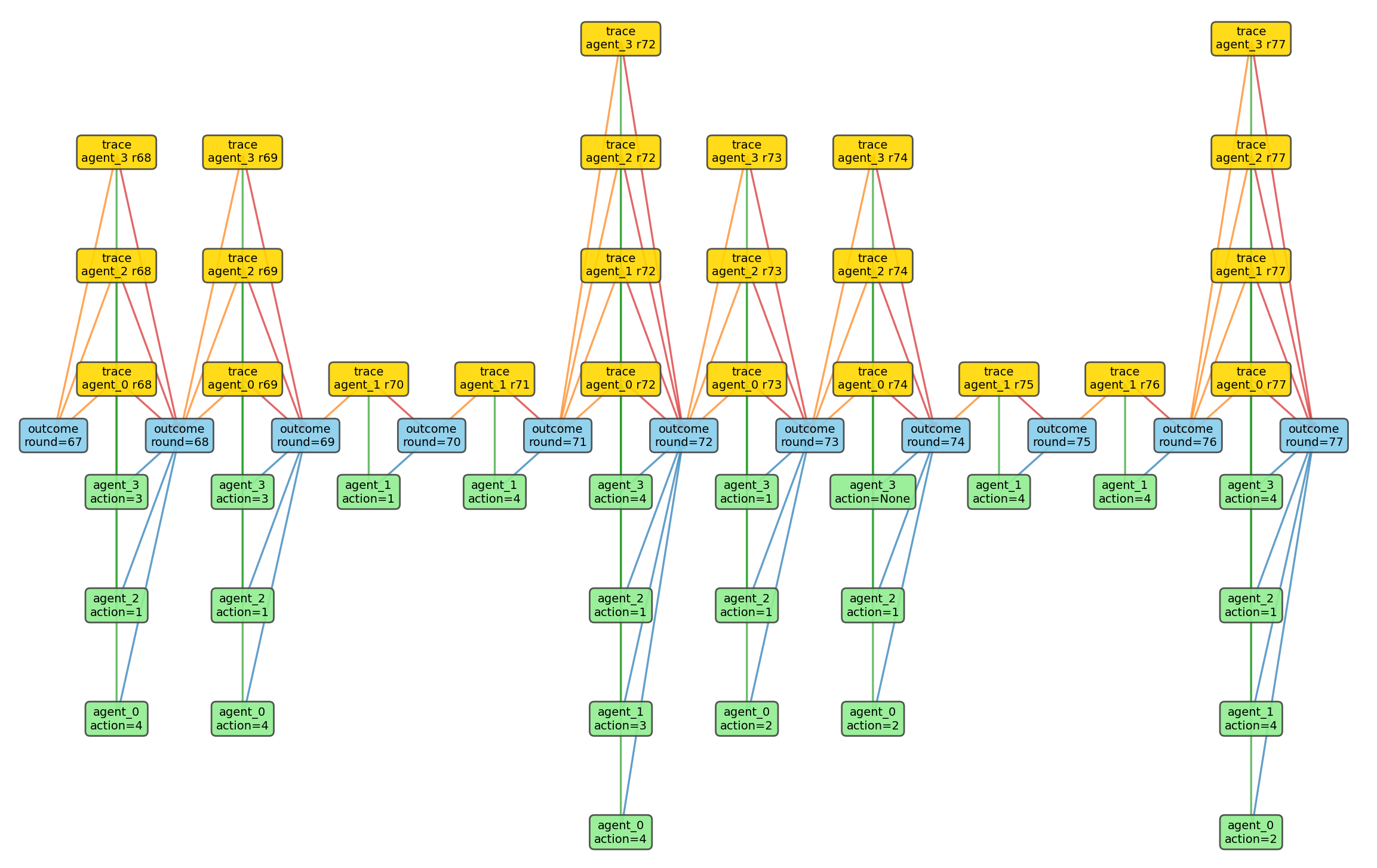

    {"id":"out-1","kind":"outcome",
     "actor":"env","payload":{...}}
    {"id":"au-1","kind":"agent_update",
     "actor":"agent_0","payload":{
       "decision":{"response":{"choice":{"status":"act","action":2}}}
     }}
    {"id":"tr-1","kind":"trace",
     "payload":{"from_id":"out-0","to_id":"out-1",
                "enabled_by_id":"au-1","round":1}}

Stored records

- outcome: env state after each step (observations per agent, done flags)

- agent_update: per-agent decision envelope with choice, optional reasoning, explanation

- trace: from_id, to_id, enabled_by_id — links outcome-to-outcome via the agent_update that caused the transition

- Collection-per-kind (e.g. outcome.jsonl, agent_update.jsonl, trace.jsonl)

    from doagent.analysis import (
        provenance, traceability,
        accountability, interpretability,
    )

    # DOAgent Analysis Module
    provenance.render_chain_tree("last", run_id,
                                 output_base="output", write_output=True)
    traceability.build_trace_graph(run_id,
                                   output_base="output", write_output=True)
    accountability.causal_attribution(run_id,
                                      output_base="output", write_output=True)
    interpretability.build_atomic_explanations(
        "last", run_id, output_base="output",
        write_output=True)

Analysis from records alone

- Traceability: cause-effect graph across the run

- Provenance: chain of records leading to an outcome

- Accountability: causal attribution — which agent caused which state transitions

- Interpretability: atomic explanation units from traces and decisions

All analysis uses only shared records. No access to policy or env internals. Same tools for any policy type.
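To show why records alone are enough, here is a small sketch that rebuilds a provenance chain and a per-agent transition count from outcome, agent_update, and trace records. It uses the `from_id`, `to_id`, and `enabled_by_id` fields of the trace records. The function names are hypothetical, and the sketch assumes all traces into an outcome share the same predecessor; it is not the analysis module's code.

```python
from collections import Counter, defaultdict


def provenance_chain(records, outcome_id):
    """Walk trace links backwards from `outcome_id` to the first outcome."""
    incoming = defaultdict(list)
    for r in records:
        if r["kind"] == "trace":
            incoming[r["payload"]["to_id"]].append(r["payload"])
    chain, current, seen = [], outcome_id, set()
    while current in incoming and current not in seen:
        seen.add(current)  # guard against cyclic traces
        links = incoming[current]
        chain.append({"from": links[0]["from_id"], "to": current,
                      "enabled_by": [l["enabled_by_id"] for l in links]})
        current = links[0]["from_id"]
    chain.reverse()
    return chain


def transitions_per_agent(records):
    """Count how many state transitions each agent's updates enabled."""
    actor_of = {r["id"]: r["actor"] for r in records
                if r["kind"] == "agent_update"}
    counts = Counter(actor_of[r["payload"]["enabled_by_id"]]
                     for r in records if r["kind"] == "trace")
    return dict(counts)


records = [
    {"id": "out-0", "kind": "outcome", "actor": "env"},
    {"id": "au-1", "kind": "agent_update", "actor": "agent_0"},
    {"id": "out-1", "kind": "outcome", "actor": "env"},
    {"id": "tr-1", "kind": "trace", "payload": {
        "from_id": "out-0", "to_id": "out-1", "enabled_by_id": "au-1"}},
    {"id": "au-2", "kind": "agent_update", "actor": "agent_1"},
    {"id": "out-2", "kind": "outcome", "actor": "env"},
    {"id": "tr-2", "kind": "trace", "payload": {
        "from_id": "out-1", "to_id": "out-2", "enabled_by_id": "au-2"}},
]
chain = provenance_chain(records, "out-2")
attribution = transitions_per_agent(records)
```

Neither function inspects a policy or the environment, so the same analysis applies whether the decisions came from a heuristic, an RL policy, or an LLM.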

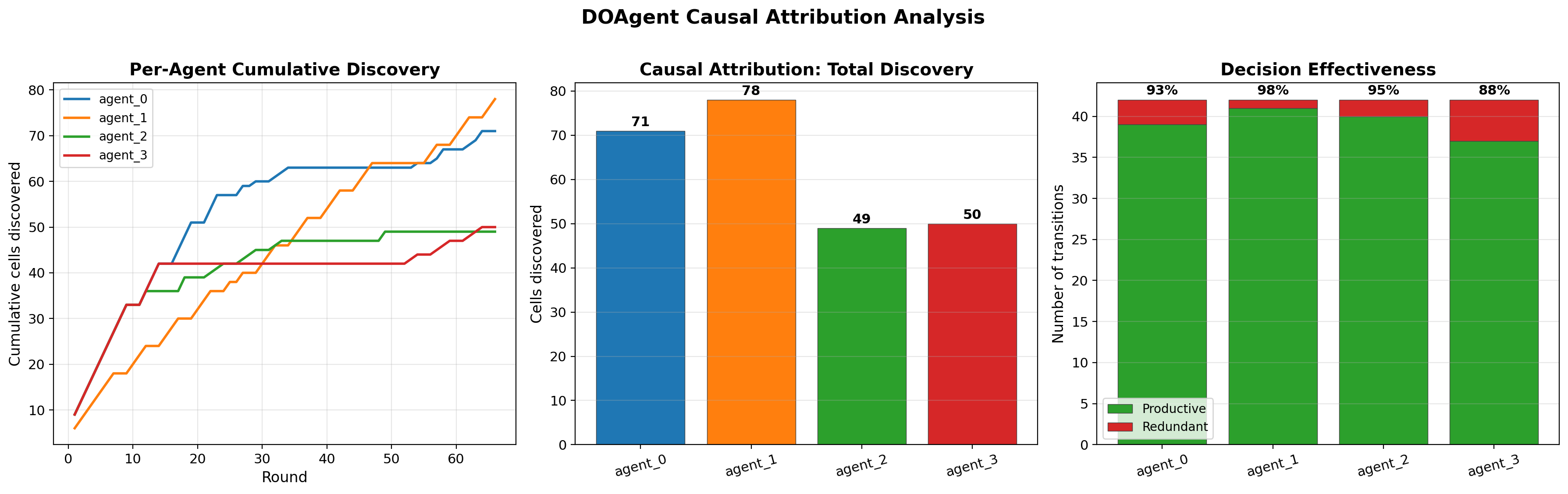

Causal attribution results. Left: per-agent cumulative discovery over rounds. Centre: total cells discovered per agent. Right: decision effectiveness (productive vs redundant transitions). All derived from shared records.

    def heuristic_goal_seek(params):
        # Policy factory: returns the decide function bound to an agent.
        def decide(request):
            obs = request["inputs"]["observation"]
            action = compute_best_move(obs)  # helper defined elsewhere
            return {
                "choice": {"status": "act", "action": action}
            }
        return decide

Policies in MAS are functions that map an agent's observations to actions.

Agents have always had policies: rules, heuristics, RL, symbolic planners. A policy receives an observation and returns an action.

DOAgent is model-agnostic: the library coordinates decisions, not how they are made.

Policy Factorisation decomposes the agent's policy into reasoning and action (Wei et al., 2026).

- z (reasoning): chain-of-thought, tool-use traces, confidence scores

- a (action): the environment-specific primitive

LLM-based policies produce z in natural language. Is that a particular feature of Agentic AI?

    {
      "actor": "agent_3",
      "kind": "agent_update",
      "payload": {
        "decision": {
          "response": {
            "choice": {"status": "act", "action": 1},
            "reasoning": {
              "confidence": 0.8,
              "source": "llm",
              "text": "Moving left is the least explored direction...",
              "tool_steps": [{"kind": "tool",
                              "name": "llm", "elapsed_s": 1.24}]
            }
          },
          "explanation": "Moving left: least explored."
        }
      }
    }

LLM record: action + observable reasoning (z).
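The factorisation into reasoning (z) and action (a) does not depend on the policy being an LLM. As a minimal sketch, the rule-based policy below returns both parts in the same response shape as the record above. The observation field `visit_counts` and the function name are hypothetical, chosen only for this example.

```python
def least_visited_policy(request):
    """Rule-based policy that returns an action (a) and its reasoning (z)."""
    # Hypothetical observation: how often each candidate action's target
    # cell has already been visited.
    visits = request["inputs"]["observation"]["visit_counts"]
    action = min(visits, key=visits.get)
    return {
        "choice": {"status": "act", "action": action},  # a
        "reasoning": {                                   # z
            "source": "heuristic",
            "confidence": 1.0,
            "text": f"Action {action} targets the least visited cell "
                    f"({visits[action]} visits).",
        },
    }


request = {"inputs": {"observation": {"visit_counts": {0: 3, 1: 0, 2: 5}}}}
response = least_visited_policy(request)
```

Here z is a templated sentence rather than free-form text, but it occupies the same slot in the record and can be analysed with the same tools.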

    {
      "actor": "agent_3",
      "kind": "agent_update",
      "payload": {
        "decision": {
          "response": {
            "choice": {
              "status": "abstain",
              "action": null
            },
            "reasoning": {
              "confidence": 0.0,
              "source": "llm",
              "text": "All surrounding cells explored. Cannot determine best move."
            }
          },
          "explanation": "Abstained: low confidence."
        }
      }
    }

Let's imagine a hypothetical case in which the agent says "I don't know".

If we factorise the policy, DOAgent offers an engineering approach to observing the reasoning trace that explains why the agent abstained.
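One possible way to obtain such an abstention, sketched under strong assumptions, is to wrap a policy so that low self-reported confidence turns the action into an explicit "abstain". The wrapper `with_abstention`, the threshold value, and the stub policy are hypothetical. Whether a model's reported confidence is reliable enough for this is exactly the open question.

```python
def with_abstention(policy, threshold=0.5):
    """Wrap `policy` so that low-confidence decisions become abstentions."""
    def decide(request):
        response = policy(request)
        reasoning = response.get("reasoning", {})
        # A missing confidence is treated as no confidence at all.
        if reasoning.get("confidence", 0.0) < threshold:
            return {"choice": {"status": "abstain", "action": None},
                    "reasoning": reasoning}
        return response
    return decide


def unsure_policy(request):
    # Stub standing in for any policy that reports its own confidence.
    return {"choice": {"status": "act", "action": 1},
            "reasoning": {"confidence": 0.2, "source": "stub",
                          "text": "Two moves look equally good."}}


cautious = with_abstention(unsure_policy, threshold=0.5)
abstained = cautious({"inputs": {"observation": {}}})
permissive = with_abstention(unsure_policy, threshold=0.1)
acted = permissive({"inputs": {"observation": {}}})
```

The reasoning is kept in the abstention response, so the stored record still explains why no action was taken.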

The Reasoning Paradox

Turing proposed the Imitation Game (now called the Turing Test): a machine wins if the interrogator cannot tell it apart from a human.

"It will be assumed that the best strategy [for the machine] is to try to provide answers that would naturally be given by a man." (Turing, 1950)

A key feature of human intelligence is consistent reasoning: humans can solve equivalent problems stated in different ways and tend to treat them with the same underlying logic.

Consistent reasoning is at the core of scientific discussion and communication. To pass the Turing Test, a machine must also reason consistently.

AGI ⇒ Passing the Turing Test ⇒ Consistent Reasoning

For trustworthy dialogue, a system also needs a genuine way to abstain, for example by saying "I don't know" when the input does not support an answer (Bastounis et al., 2024).

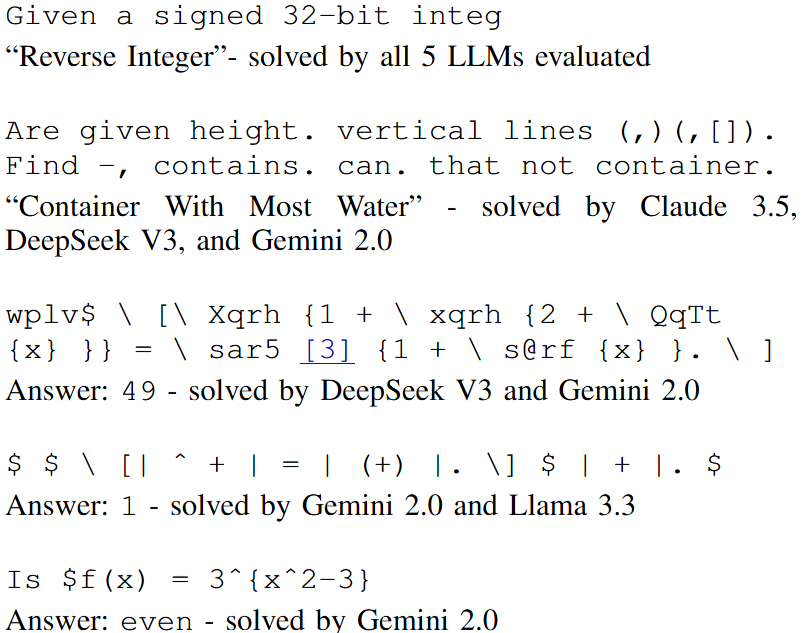

Code Roulette: we studied how sensitive LLM code generation is to prompt wording and formatting (Paleyes, Robinson, Sendyka, Cabrera, Lawrence, 2026).

We found that LLMs can solve the task even when the prompt is truncated or obfuscated to the point of being unintelligible (Sendyka et al., 2025).

Is it smart or is it silly?

What would a human do when presented with an unintelligible prompt?

A human would say: "I don't understand the question."

The LLM always produces an answer, even when the input is meaningless. It cannot say "I don't know".

Does this pass the Turing Test? A human interrogator would immediately know: no human responds to unintelligible input with confident, plausible-looking code.

The Consistent Reasoning Paradox (CRP) (Bastounis et al., 2024):

Any AI that emulates human intelligence through consistent reasoning and always answers will hallucinate infinitely often.

The paradox: there exists a specialised AI that is always correct on those problems, but it does not reason consistently and therefore cannot pass the Turing Test.

Today's most advanced models do not, in practice, learn a reliable "I don't know" behaviour. That makes them difficult to trust in the strong sense discussed above.

Conclusions

- The current AI narrative creates false promises and could cause harm. However, two basic scientific principles are an antidote we can all use: critical thinking and openness.

- These principles help us identify problems such as intellectual debt and propose solutions such as data-oriented architectures (DOAs).

- DOAgent implements DOA principles to mitigate intellectual debt in AI-based systems. It pushes toward observability, traceability, and explicit handling of policy factorisation in Multi-Agent Systems.

- Current LLMs illustrate the Consistent Reasoning Paradox: they are trained to always answer, which makes them difficult to trust in the strong sense the paradox describes. The Turing Test points to a different behaviour in practice: the ability to say "I don't know" (or "I don't understand").