The AI Narrative

"AI will be capable of generating novel insights next year." (Altman, 2025)

"Self-improving AI will create a super intelligence." (Musk, 2025)

"When I look at the data, I see many trend lines up to 2027." (Clark, 2025)

"There is a 10-20% chance that the technology will end in human extinction." (Hinton, 2025)

"Our relationship with future AI systems is that we are going to be their boss." (LeCun, 2025)

"Machines will be capable, within twenty years, of doing any work a man can do." (Simon, 1965)

"In from three to eight years we will have a machine with the general intelligence of an average human being." (Minsky, 1970)

"In medicine, management, and the military — indeed in most of the world's work — the daily tasks are those requiring symbolic reasoning with detailed professional knowledge." (Feigenbaum, 1982)

The Scientific Method

"It seems to me what is called for is an exquisite balance between two conflicting needs: the most skeptical scrutiny of all hypotheses that are served up to us and at the same time a great openness to new ideas. Obviously those two modes of thought are in some tension. But if you are able to exercise only one of these modes, whichever one it is, you're in deep trouble." (The Burden of Skepticism, Sagan, 1987)

The "Technocentric" View

The Systems View

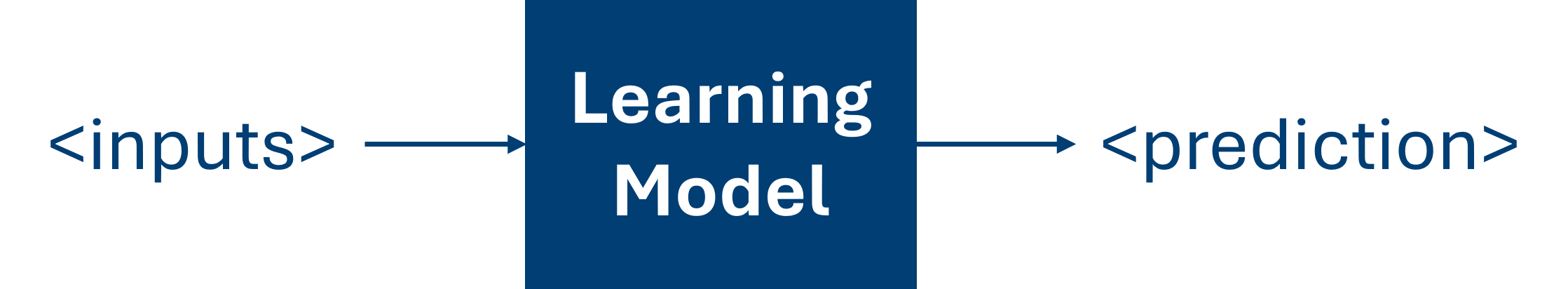

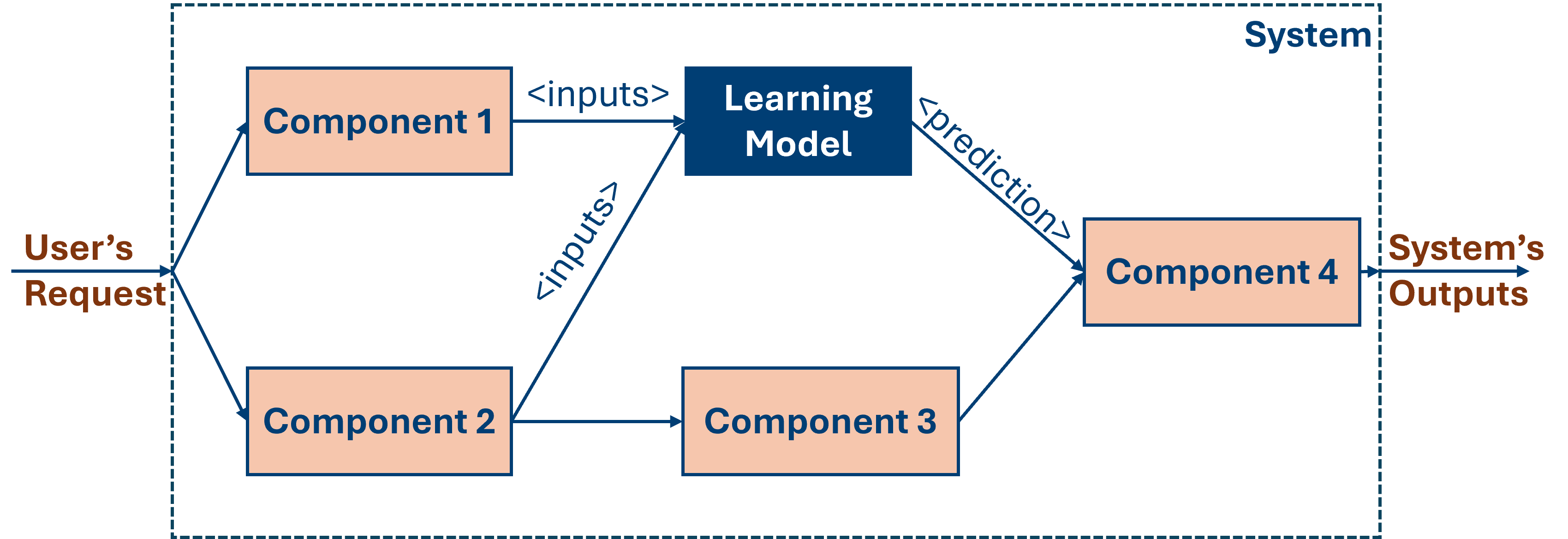

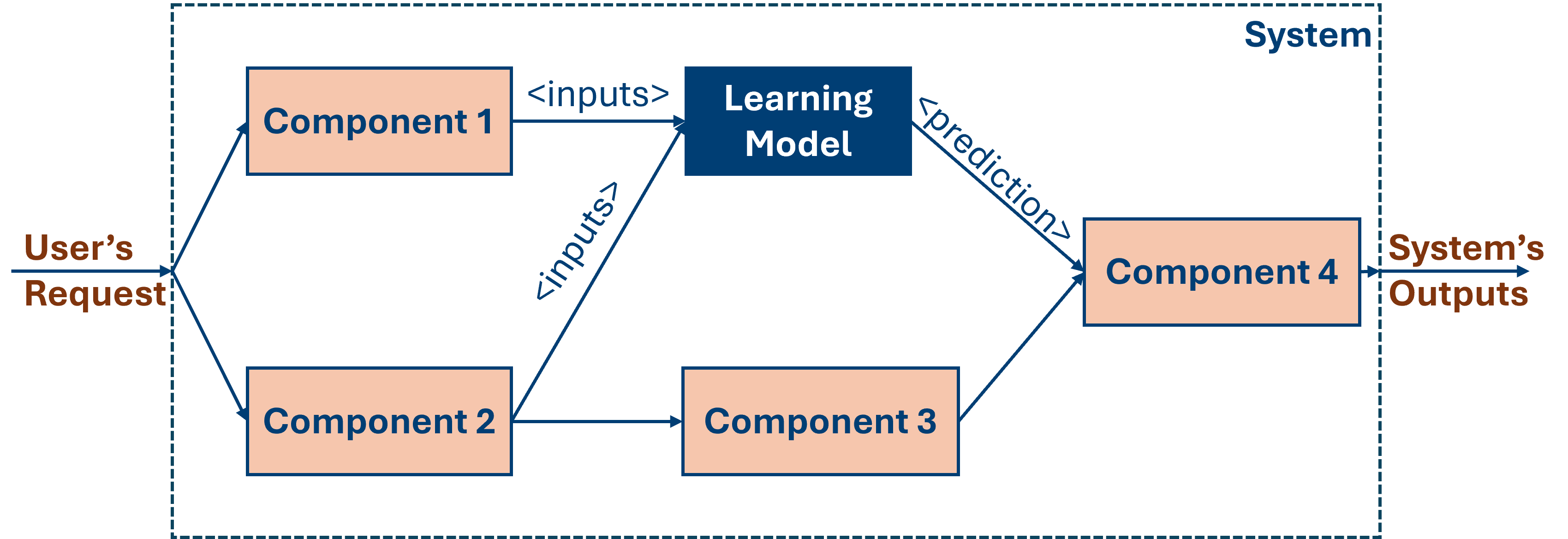

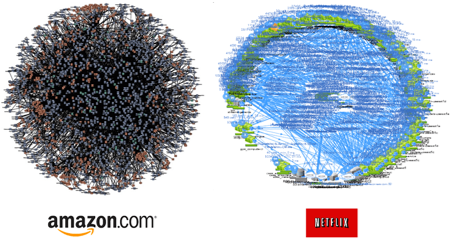

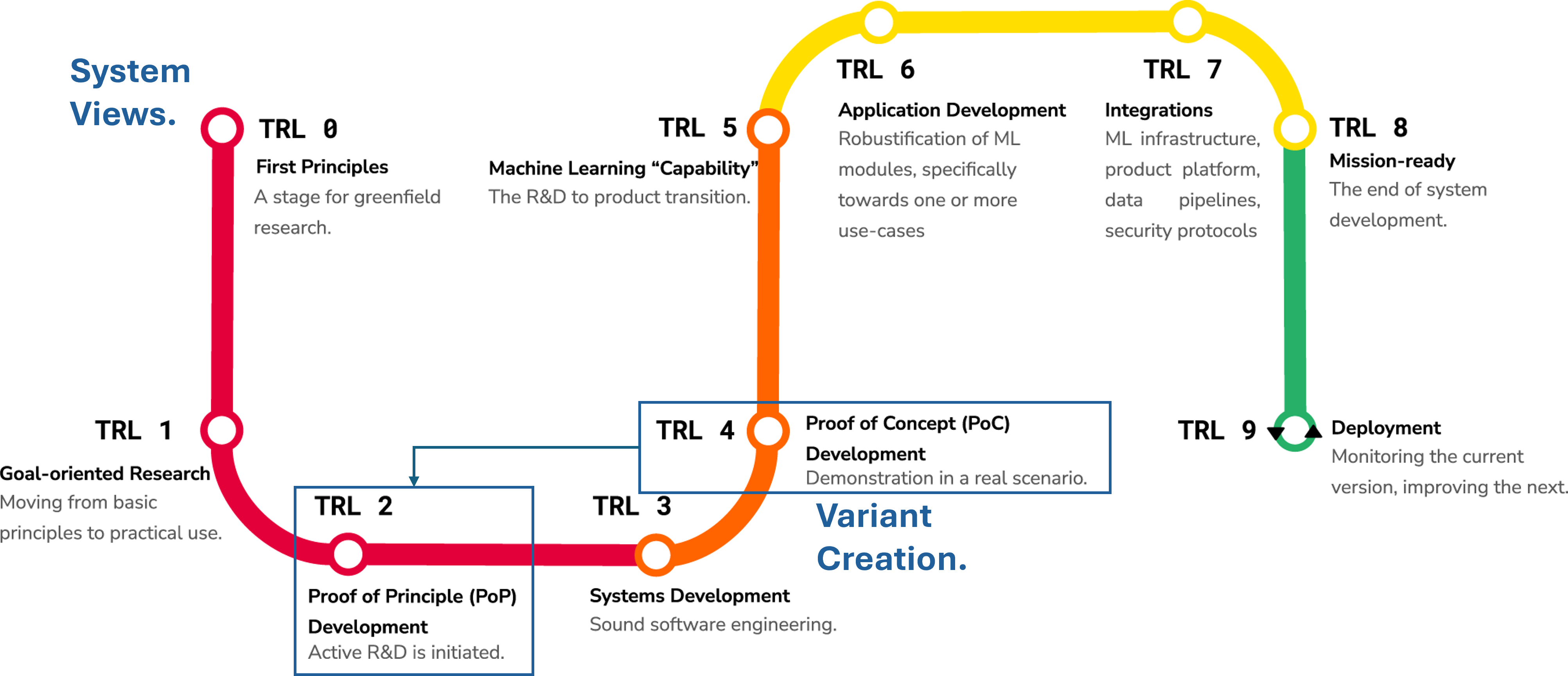

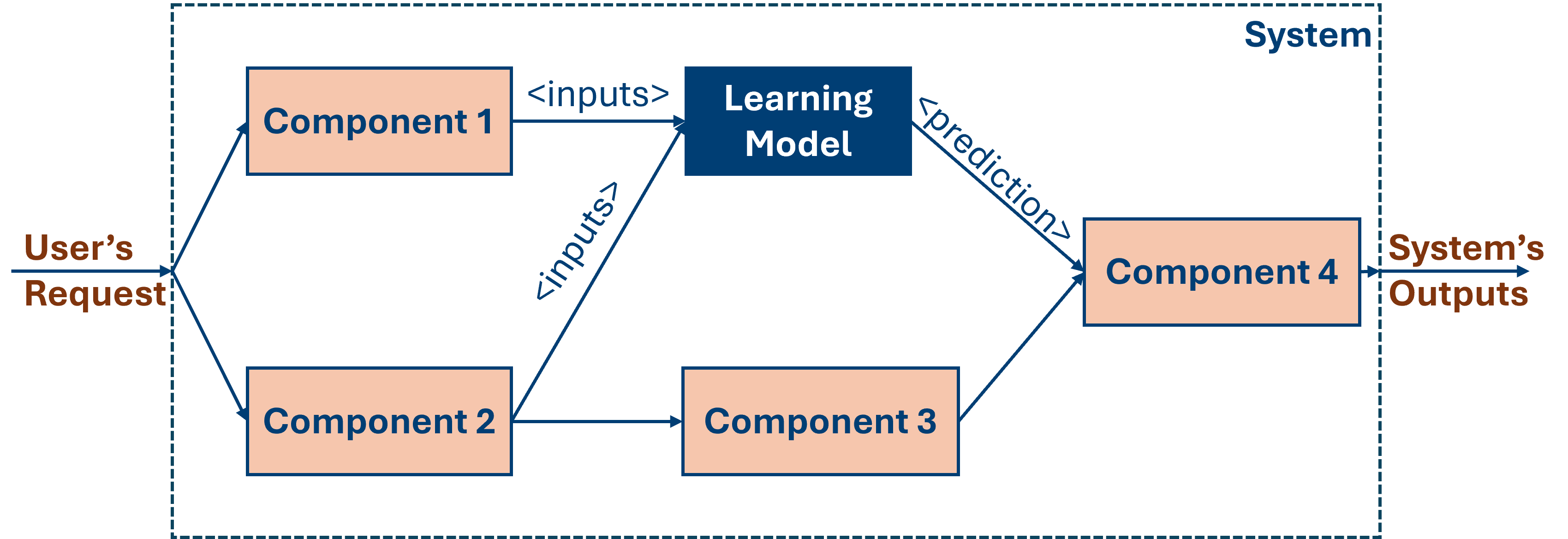

AI-based software systems are data-driven. Unlike in traditional systems, developers cannot fully predefine their behaviour. ML components learn such behaviour from data, operating as black boxes that propagate uncertainty into complex software.

Intellectual Debt

Intellectual Debt: Practitioners deploy data-driven systems that work in practice without fully understanding their inner workings. This threatens transparency, safety, and trust, and increases the risk of AI having a negative social impact (Zittrain, 2022).

The Systems Engineering Approach

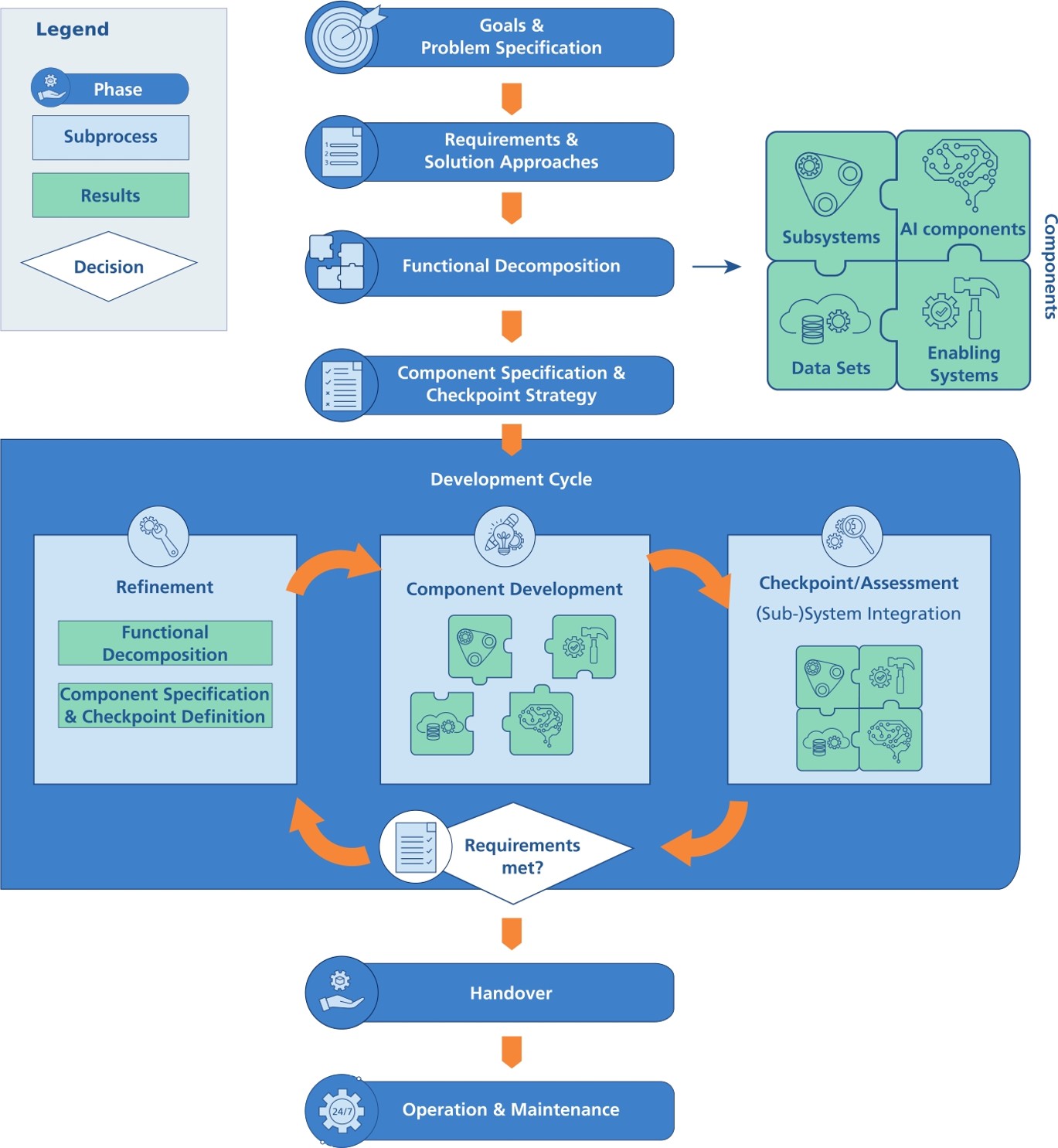

The systems engineering approach is better equipped than current practice in the ML community to facilitate the adoption of this technology, because it prioritises the problem and its context before any other aspect.

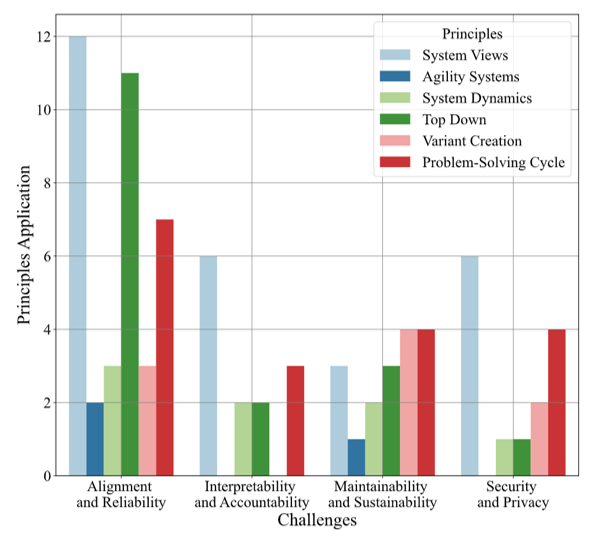

A survey of research works that apply systems engineering principles to address these challenges when deploying AI-based systems.

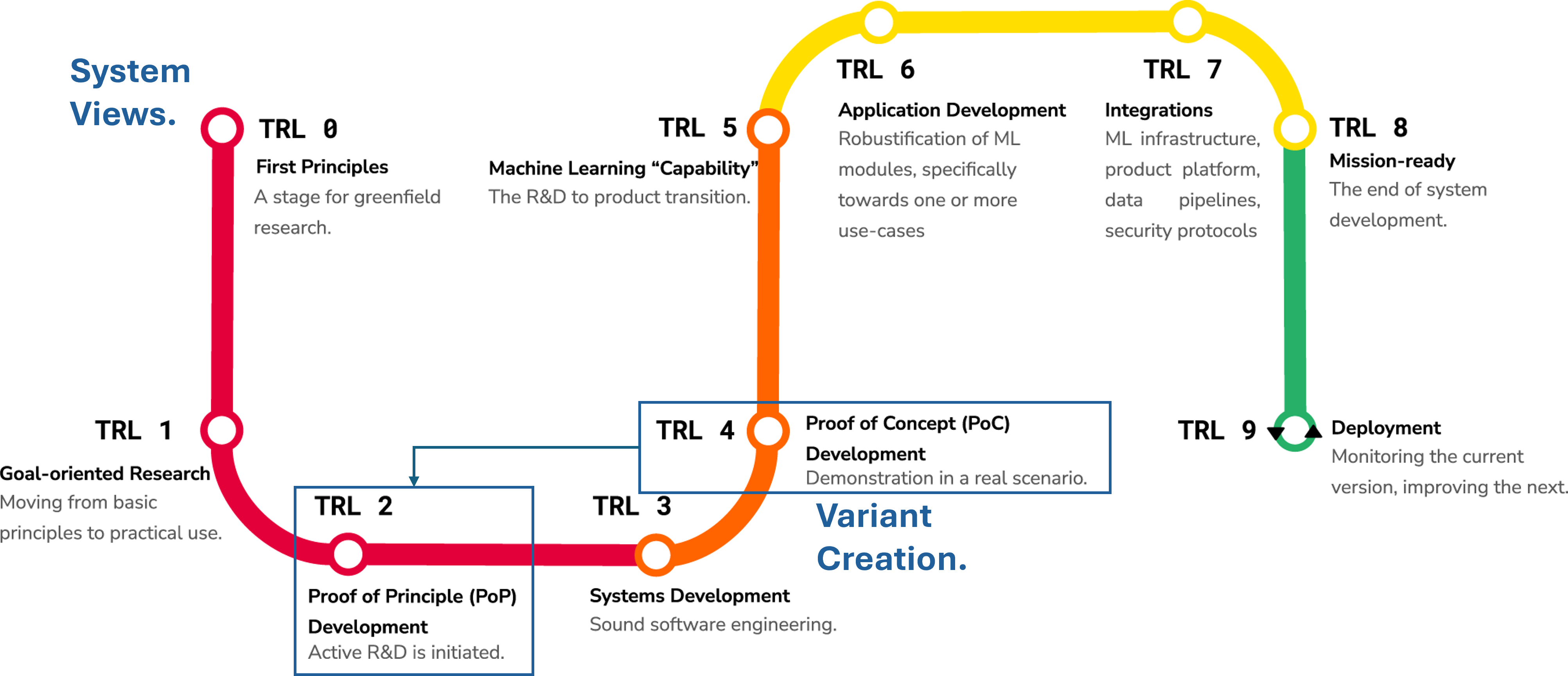

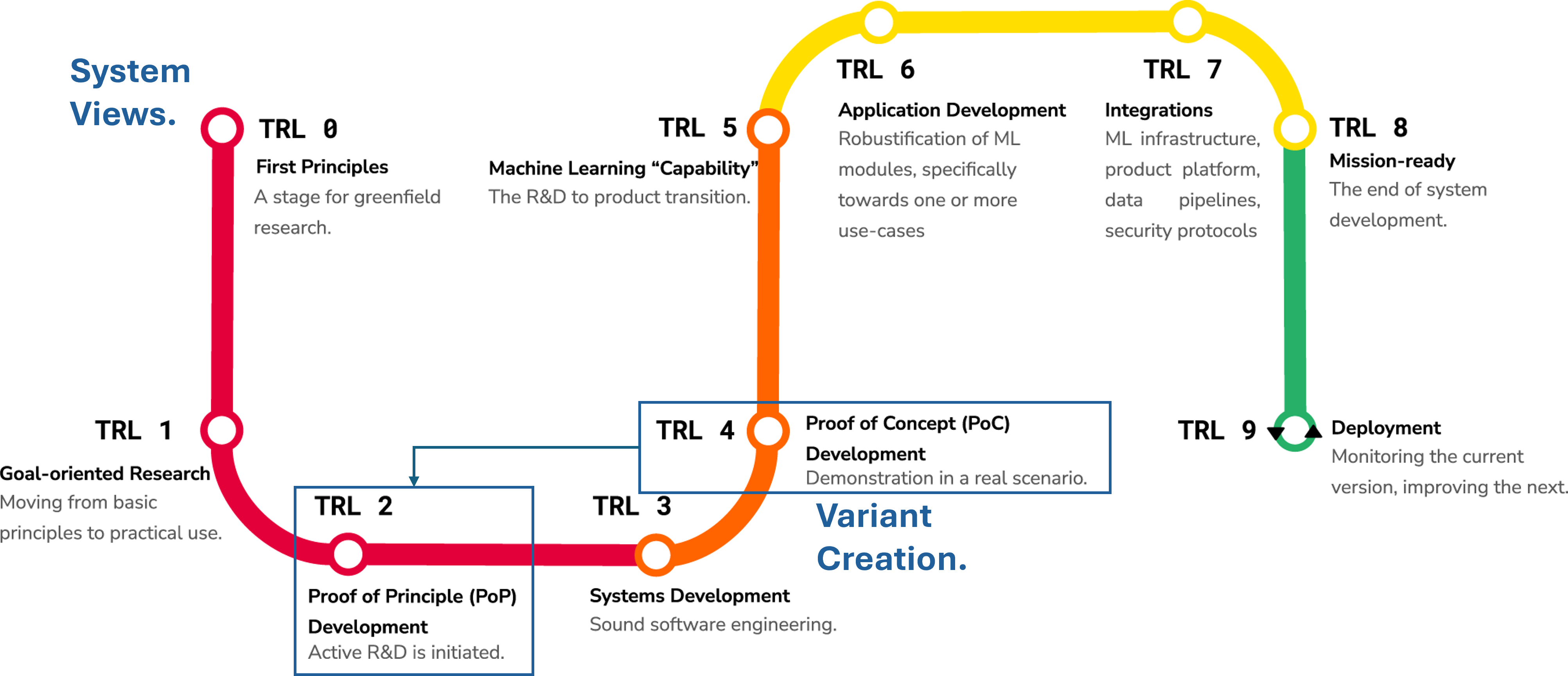

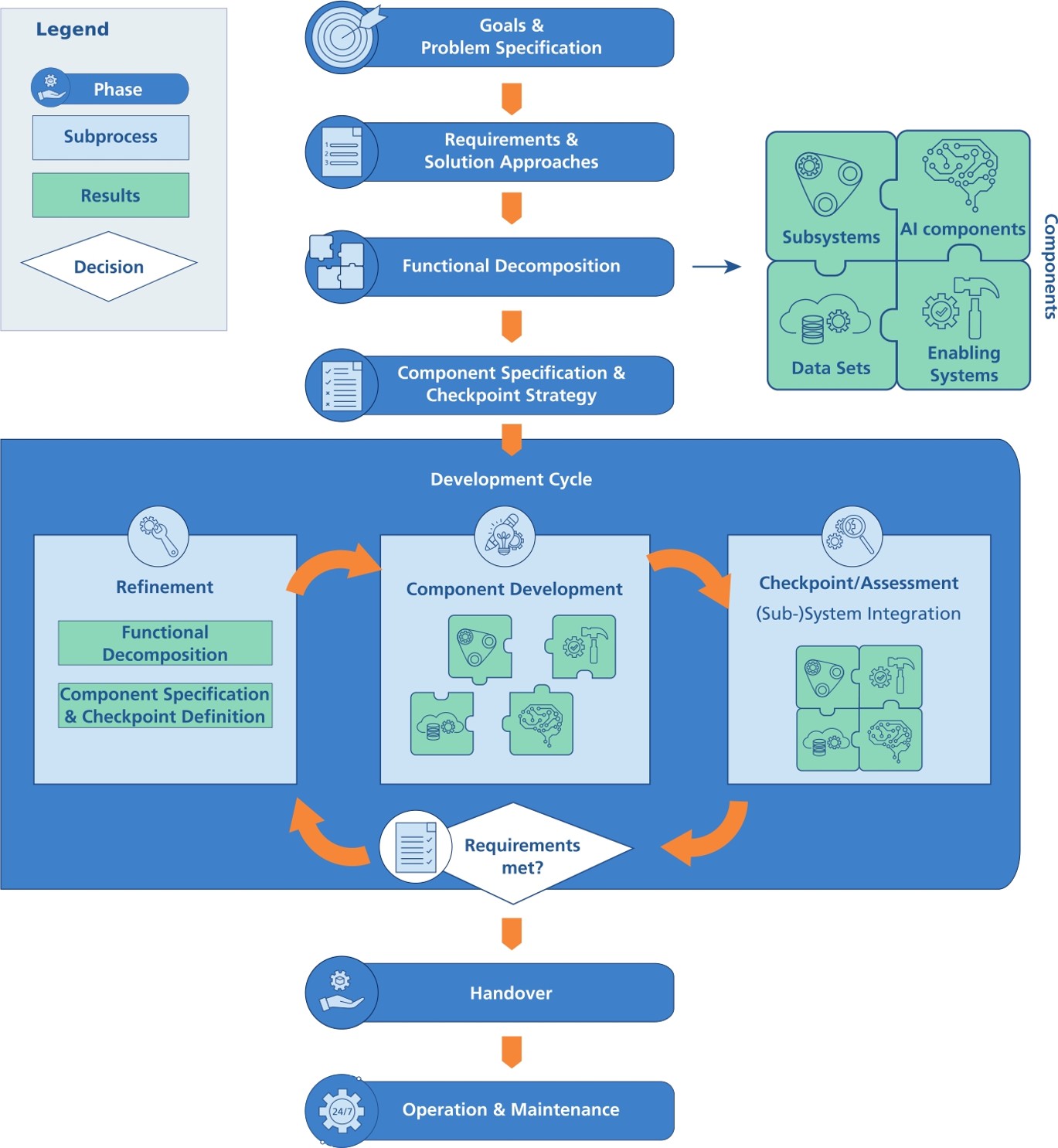

MLTRL - Technology Readiness Levels for Machine Learning Systems

Learn more in Lavin et al. (2022).

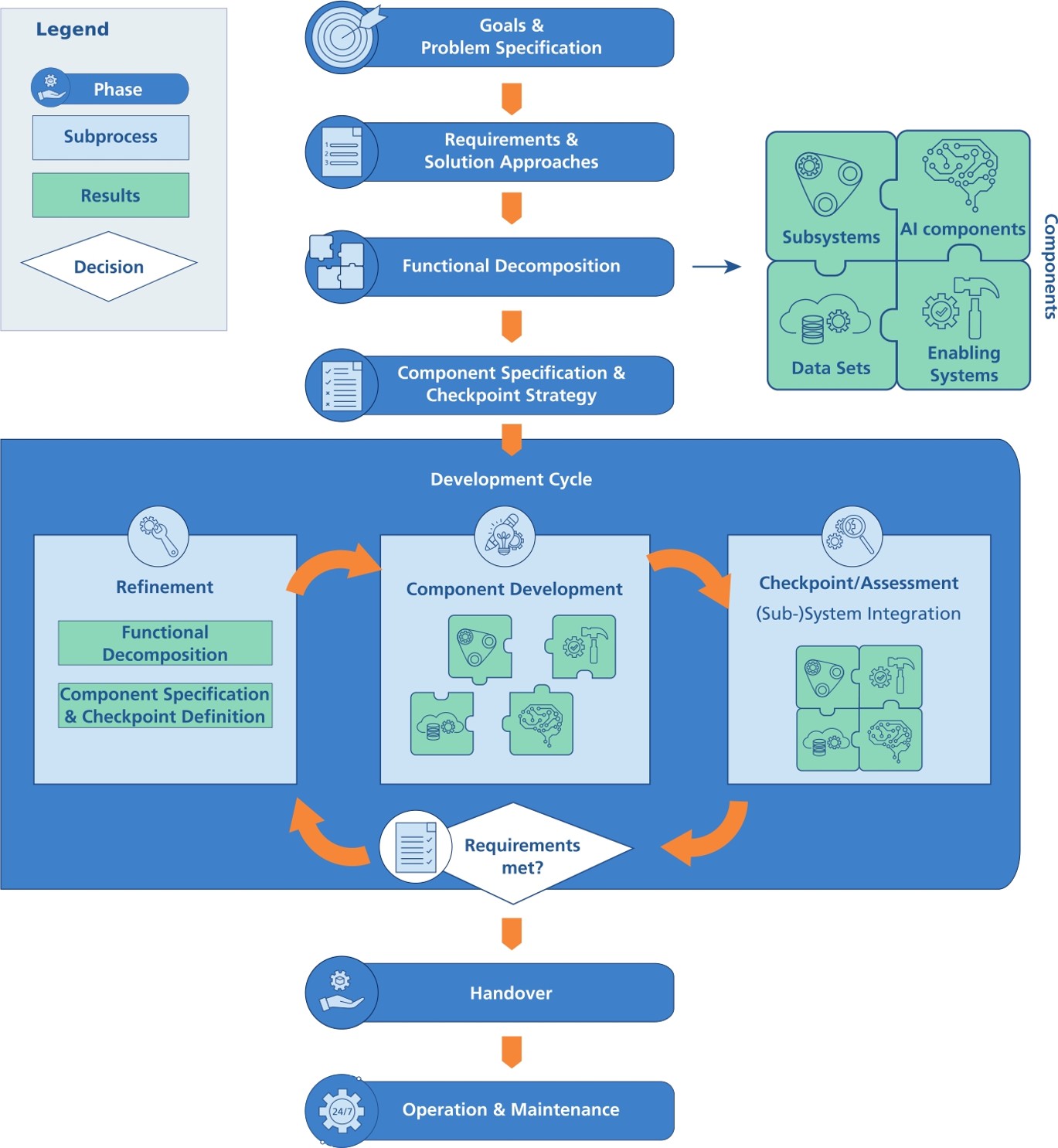

PAISE® – Process Model for AI Systems Engineering

Learn more in Hasterok & Stompe (2022).

These results contrast with the way we work today.

"Move Fast and Break Things" (Zuckerberg, 2014)

- Move fast and deliver working software

- Embrace failure as a learning opportunity

- Prioritise speed and agility

- ...

Inserting ML components into our software systems lowers the bar for these systems to qualify as critical systems. Learn more in Cabrera et al. (2025).

We need to be careful when designing, developing, deploying, and decommissioning ML-based systems.

VibeSafe

Vibe coding is a software development practice assisted by artificial intelligence (AI) and based on chatbots (programs that simulate conversation). The software developer describes a project or task in a prompt to a large language model (LLM), which generates source code automatically. (Source: Wikipedia's article on vibe coding.)

VibeSafe is a collection of standardized project management practices designed to promote consistent, high-quality development across projects. It is an open-source project developed by Neil Lawrence and is available on GitHub.

I have been using VibeSafe to develop the DOAgent project.

The goal is to develop a Python library that addresses the intellectual debt problem in Multi-Agent Systems (i.e., Agentic AI).

Conclusions

- The scientific method provides a way to assess the AI narrative objectively. The "Technocentric" view is problematic because it ignores the real-world context. In contrast, the Systems View allows us to identify the technology's limitations (e.g., Intellectual Debt).

- Intellectual Debt can affect our work as software developers when vibe coding. The most basic principles of systems engineering are key to addressing this issue, because they lead to methodologies that focus more on the requirements, planning, and documentation stages.

- VibeSafe is an example of this approach. Should Katary implement its own?